We are only at the beginning of a predictive SEO revolution, one that will change the way we approach SEO. AI and machine learning are starting to have a tremendous impact on how agencies develop their SEO strategies. Take an in-depth journey into the data and lessons we have learned over the last 4 years of SEO AI analysis.


In our industry, there’s hardly time to really think about where strategic decisions for rankings come from. When we say we want to rank a website, we tend to make decisions based on what we already know about SEO. That seemed obvious until we researched further into what exactly we, as SEO providers, are doing when we make those decisions.

When studying the factors that matter to SEO, the traditional routine is to put our observations under a microscope. We review the latest Google algorithm updates. We pore over hundreds of case studies to check what has worked and what hasn’t. We even watch YouTube videos, like everyone else, for outside tips and guidance.

We All Have a Predictive Mindset

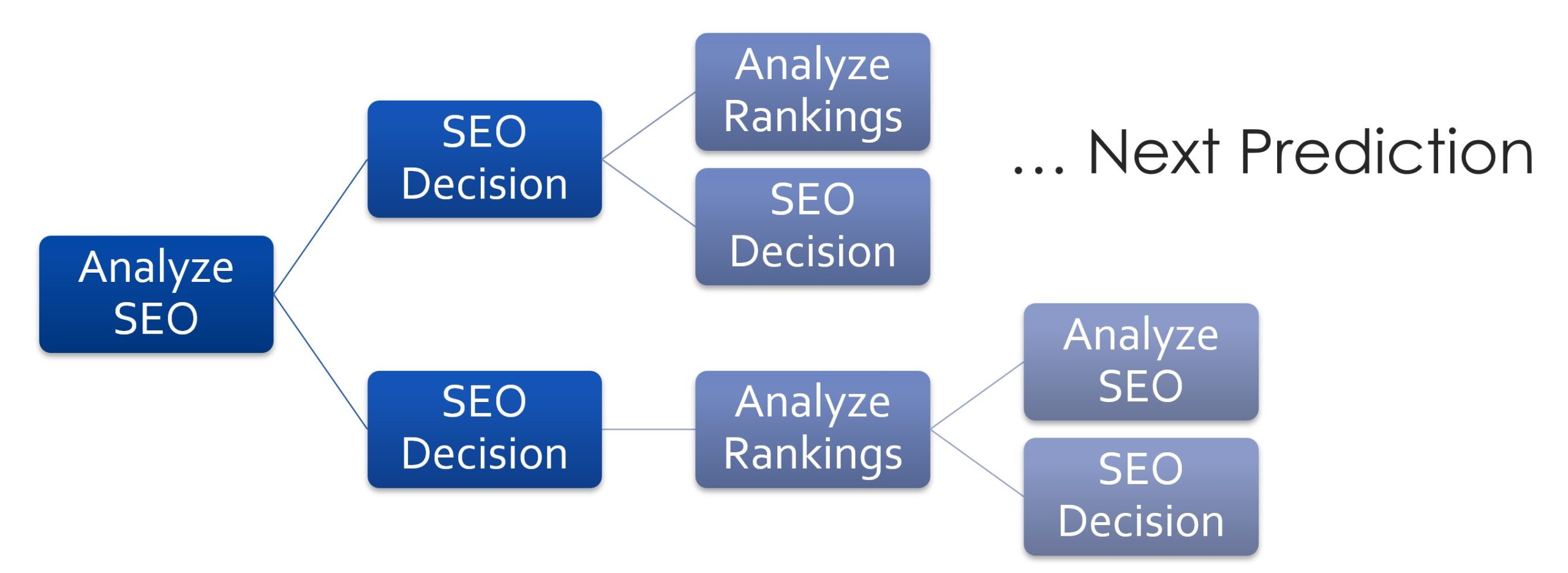

We came to realize that every SEO decision we make is actually a branch: a choice meant to predict the best possible outcome. When we say we are identifying SEO issues on a website, what we actually mean is that if we fix these issues, then in theory our rankings will improve.

We found that every SEO decision is based on a prediction. That’s all it is. We don’t actually know what the result of our actions will be until we measure rankings after Google has seen our changes and determined their value.

For instance, if we optimize titles and meta descriptions, or write content that’s semantically related to the keywords we want to target, then that should lead to better results. At least in theory.

But if that’s the case, why aren’t the results guaranteed? Sometimes rankings don’t go up, and in worst-case scenarios, they can even go down.

As SEO experts, we are sometimes perplexed when Google doesn’t seem to take our changes into account, or when our strategies, despite our best efforts, lead to less-than-expected results.

We end up pondering, reviewing, watching more YouTube videos, reading more Reddit discussion boards, and asking more people what is going on until we arrive at a new answer.

Once we settle on our new findings, we make another prediction in the hope of better results.

Over time, many tools have been developed to address at least the most common or most basic SEO issues. Tools like SEMRush can tell us whether titles and meta descriptions are filled in, or provide hundreds of automated suggestions. However, that information often lacks context, so fixing the issues these tools report won’t necessarily improve rankings for the keyword we want to target.

There are also tools that can scan an entire website and flag issues considered detrimental to SEO. However, these automated scanners are essentially “unintelligent bots”. We’ve never seen traffic pour into a website, or keyword positions surge, just from fixing automated issues. That’s when it really hit us.

We began to realize that our decisions need to be based on a deeper, more cognitive understanding of how Google works. The challenge is that Google’s search engine keeps getting more complex and smarter.

One thing Google can’t get away from is that its search engine has to provide relevant results. It’s this relevancy factor that keeps it grounded in the human side of search.

Looking at the algorithm that ultimately defines Google Search, we concluded that Google’s goal over the years has been to mimic the best experience a person can have when searching. It seems self-explanatory, but we noticed that most SEO agencies don’t look at it that way.

Results have to be relevant, important trends have to be near the top, and two people can get different results, each tailored to their own “best experience”. However, for an SEO expert knee-deep in technicalities, Google guidelines, or the hundreds of videos we have watched, this mindset is easy to lose sight of.

The issue is that there are more than 300 different factors related to rankings. Which ones are the most important? Ranking factors can be overwhelming to manage.

Branch Prediction SEO

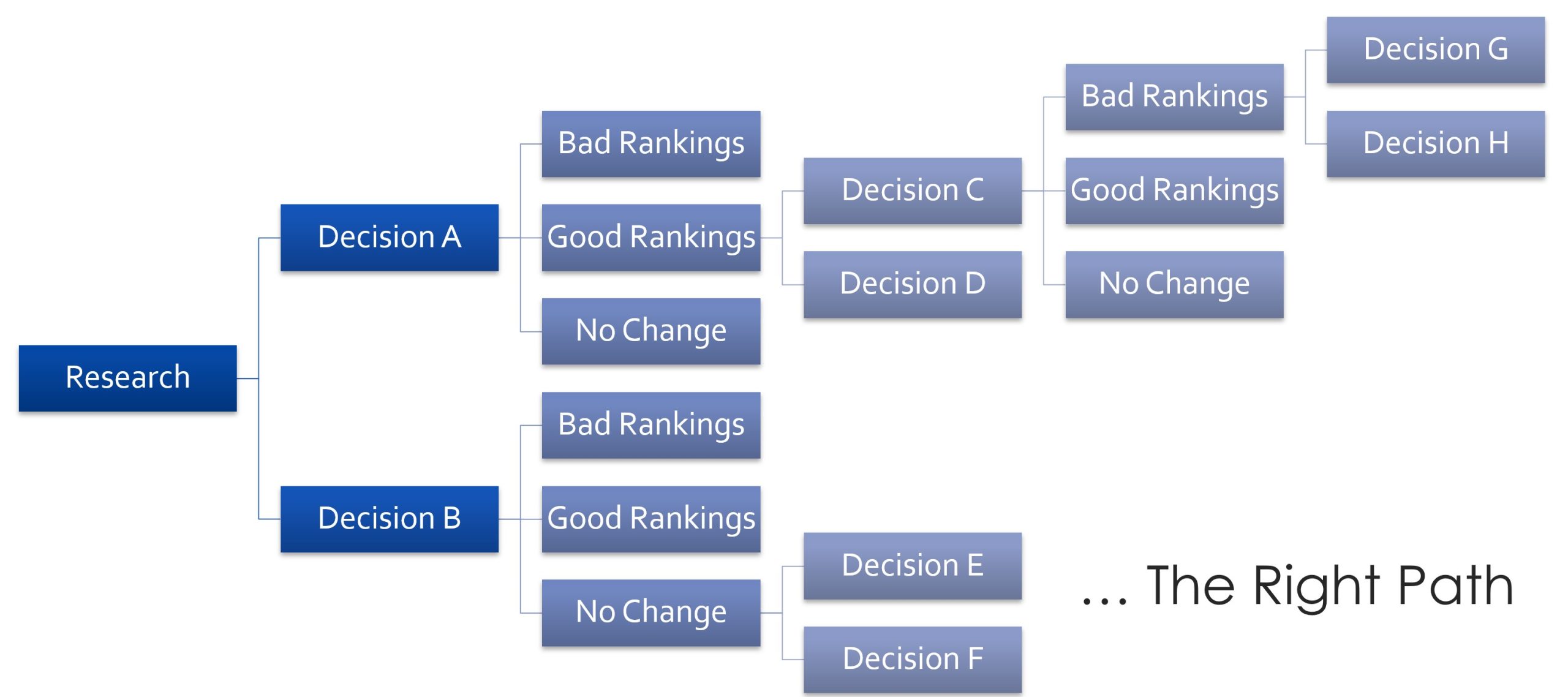

Because of all this complexity, when we make SEO choices, we want to know we are making them in the interest of maximizing rankings. In fact, when we decide on an SEO strategy, we are implicitly assigning it a probability of success in our minds.

In effect, we place a wager, as in a game of poker: we play the cards we are dealt and try to win the game. At each turn, we face a decision and branch down the path we predict will produce the best results.

The best of us will make educated guesses based on experience, observations, tools, and past results. The worst of us might as well be gamblers.
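To make the wager framing concrete, here is a minimal expected-value sketch. The branch names, probabilities, and position gains are hypothetical numbers chosen for illustration, not measured data; the only point is that each branch can be scored as probability times payoff, minus the cost of being wrong.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Branch:
    """One candidate SEO action; every value here is a hypothetical estimate."""
    name: str
    p_success: float        # estimated probability the change helps rankings
    gain_if_success: float  # positions gained if it works
    loss_if_failure: float  # positions lost if it backfires


def expected_gain(branch: Branch) -> float:
    # Expected value: p * gain - (1 - p) * loss
    return (branch.p_success * branch.gain_if_success
            - (1 - branch.p_success) * branch.loss_if_failure)


def choose_branch(branches: list[Branch]) -> Branch:
    # Take the branch with the highest expected gain
    return max(branches, key=expected_gain)


options = [
    Branch("rewrite titles", 0.6, 3.0, 1.0),
    Branch("build backlinks", 0.4, 8.0, 0.5),
    Branch("expand body copy", 0.5, 4.0, 2.0),
]
best = choose_branch(options)
print(best.name, round(expected_gain(best), 2))  # build backlinks 2.9
```

An experienced SEO does this implicitly; the difficulty the article describes is that the probabilities themselves are guesses, which is where learned models come in.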

In a game of probability and decision-making, nothing beats machines, and AI in particular. Artificial intelligence, especially machine learning, is designed to make decisions based on probabilistic outcomes learned from patterns, rules, or data in the natural world.

In Google’s case, the space is artificially constructed, but it is still bound by rules and structure.

When we dug further into this, we had to ask ourselves: if AI can play this game better than we can, should we rely on AI to make decisions for us? Or should it at least show us its decisions so that we can make better ones ourselves?

Free of prejudices, biases, and impaired judgment, AI can make decisions based purely on data, every time, at any hour. Besides, it’s very difficult for us to make judgment calls based on 300 different factors.

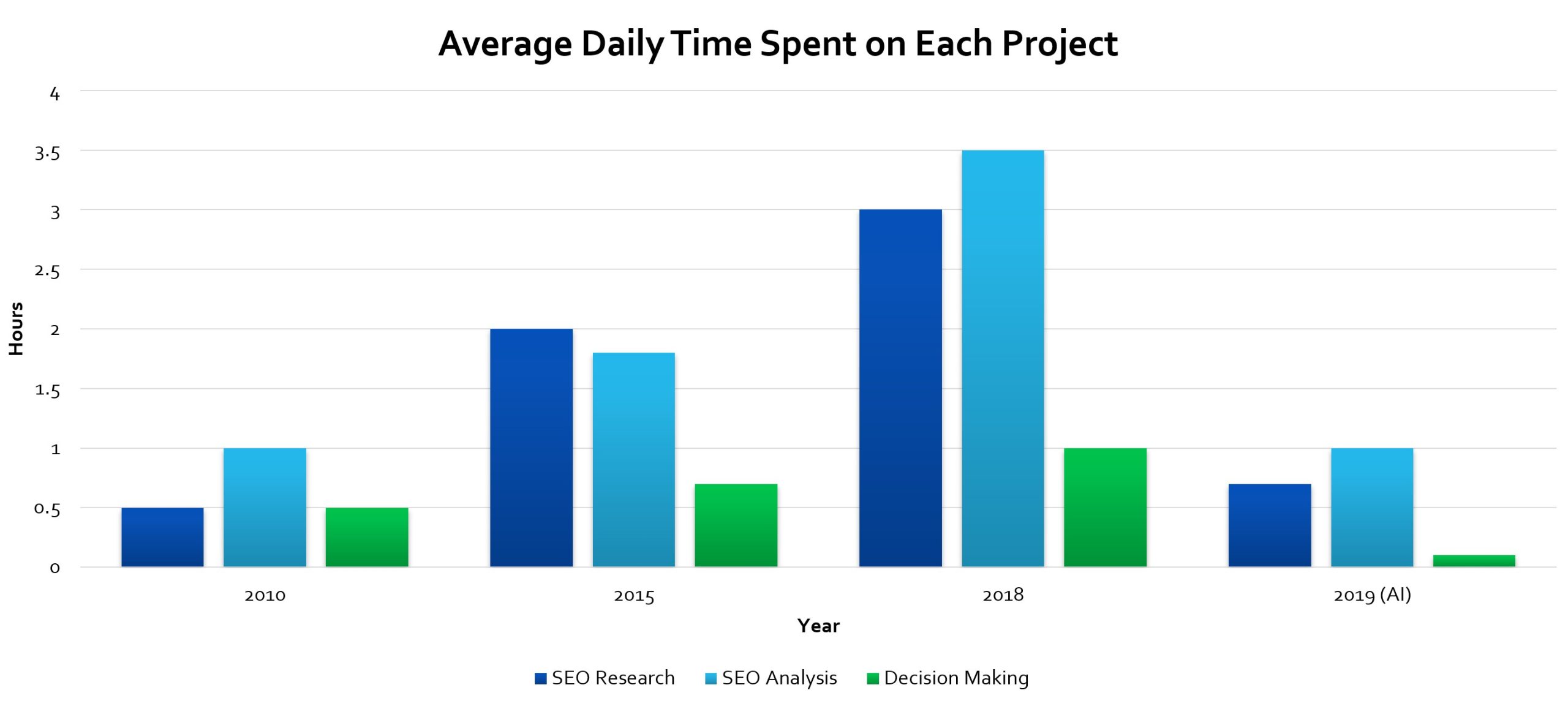

Reviewing every SEO factor in intricate detail is simply becoming infeasible for most agencies, freelancers, and experts. Unless you work on only a small number of sites, or for a corporation where you can dedicate 100% of your time to one or two projects, you have to make hard choices about how much time to spend on each project.

The “Analysis Dilemma”

Most agency owners we have spoken to don’t have the resources to hire teams of SEO analysts to perform the due diligence needed for deep analysis of every SEO project. Some agencies simply make it clear how many hours they can dedicate to a project each month.

In practice, agencies and freelancers mostly rely on automation tools like SEMRush or Ahrefs (as we do too) to point out the most obvious issues, and generally stick to the latest SEO guidance based on what they know is or isn’t working in Google search.

For the most part, this “top-of-mind” method still works reasonably well, but it has drawbacks. For one, you cannot adapt quickly enough when Google algorithm changes happen or when rankings fluctuate sharply. And if your decisions are wrong, you have to backtrack and try something else to improve your rankings.

The other problem is what we call the “analysis dilemma”: you only have so many hours in a day, and only so many people and resources available to analyze a particular project, before further analysis becomes economically unviable.

As a result, agencies and freelancers often have to make hard choices. How much time can you spend on a project before it is no longer worthwhile? In 2018, SEO Vendor found itself at the very limit of what it could do for each project.

Spending too much time on any one project cannibalized time from other projects. We therefore had to come up with a solution before it undermined our clients’ SEO performance.

SEO Predictive Machine Learning

SEO Vendor had been developing its proprietary CORE analysis technology long before the “analysis dilemma” became a problem. However, CORE analysis was initially rules-based and incapable of machine learning. Toward the end of 2018, we experimented with artificial intelligence, specifically neural networks. Much as our brain relies on networks of neurons connected by synapses, a neural-network model loosely simulates how our mind solves problems.

AI can accommodate a large number of input factors and solve problems quickly in ways that humans can’t.

The idea was that if we understood SEO to be a type of predictive modeling in our minds, then having a machine do the same work is a matter of transferring our mental methods to a computer system.

In 2018, we invented a method based on machine learning and artificial neural networks to accelerate SEO analysis so that we could work around the analysis dilemma.

Neural networks are built from layers of interconnected nodes whose weights are not known until the network has been trained on input data. Once trained, the network applies the patterns it has learned to new inputs to produce new outputs.
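As a generic illustration of that idea (a textbook network, not SEO Vendor’s CORE system), here is a minimal pure-Python network with one hidden layer. Its weights start random and are adjusted by backpropagation and gradient descent; the layer sizes, learning rate, and the toy OR-pattern data are all arbitrary choices for the sketch.

```python
import math
import random


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


class TinyNetwork:
    """Generic 2-input, 3-hidden-node, 1-output network (illustrative only)."""

    def __init__(self, n_in: int = 2, n_hidden: int = 3, seed: int = 0):
        rng = random.Random(seed)
        # Weights start random; useful values only emerge through training
        self.w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.b2 = 0.0

    def forward(self, x):
        # Hidden layer activations, then the single output node
        self.h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
                  for row, b in zip(self.w1, self.b1)]
        self.out = sigmoid(sum(w * h for w, h in zip(self.w2, self.h)) + self.b2)
        return self.out

    def train_step(self, x, y, lr: float = 0.5) -> None:
        out = self.forward(x)
        # Backpropagate the squared error 0.5 * (out - y)^2
        d_out = (out - y) * out * (1 - out)
        for j, h in enumerate(self.h):
            d_h = d_out * self.w2[j] * h * (1 - h)  # uses w2[j] before it is updated
            self.w2[j] -= lr * d_out * h
            for i, xi in enumerate(x):
                self.w1[j][i] -= lr * d_h * xi
            self.b1[j] -= lr * d_h
        self.b2 -= lr * d_out

    def mean_loss(self, data) -> float:
        return sum(0.5 * (self.forward(x) - y) ** 2 for x, y in data) / len(data)


# Toy training data (an OR pattern), not SEO data
data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
net = TinyNetwork()
loss_before = net.mean_loss(data)
for _ in range(2000):
    for x, y in data:
        net.train_step(x, y)
loss_after = net.mean_loss(data)
print(f"loss {loss_before:.3f} -> {loss_after:.3f}")
print("prediction for (1, 1):", round(net.forward((1, 1)), 2))
```

Nothing in this code is SEO-specific; the SEO knowledge comes entirely from which factors are fed in as inputs and what the network is trained to predict.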

In SEO terms, training an AI requires identifying a suite of factors that allow a site to rank high in search engines. Data from multiple sites then helps the AI learn how those factors influence rankings.

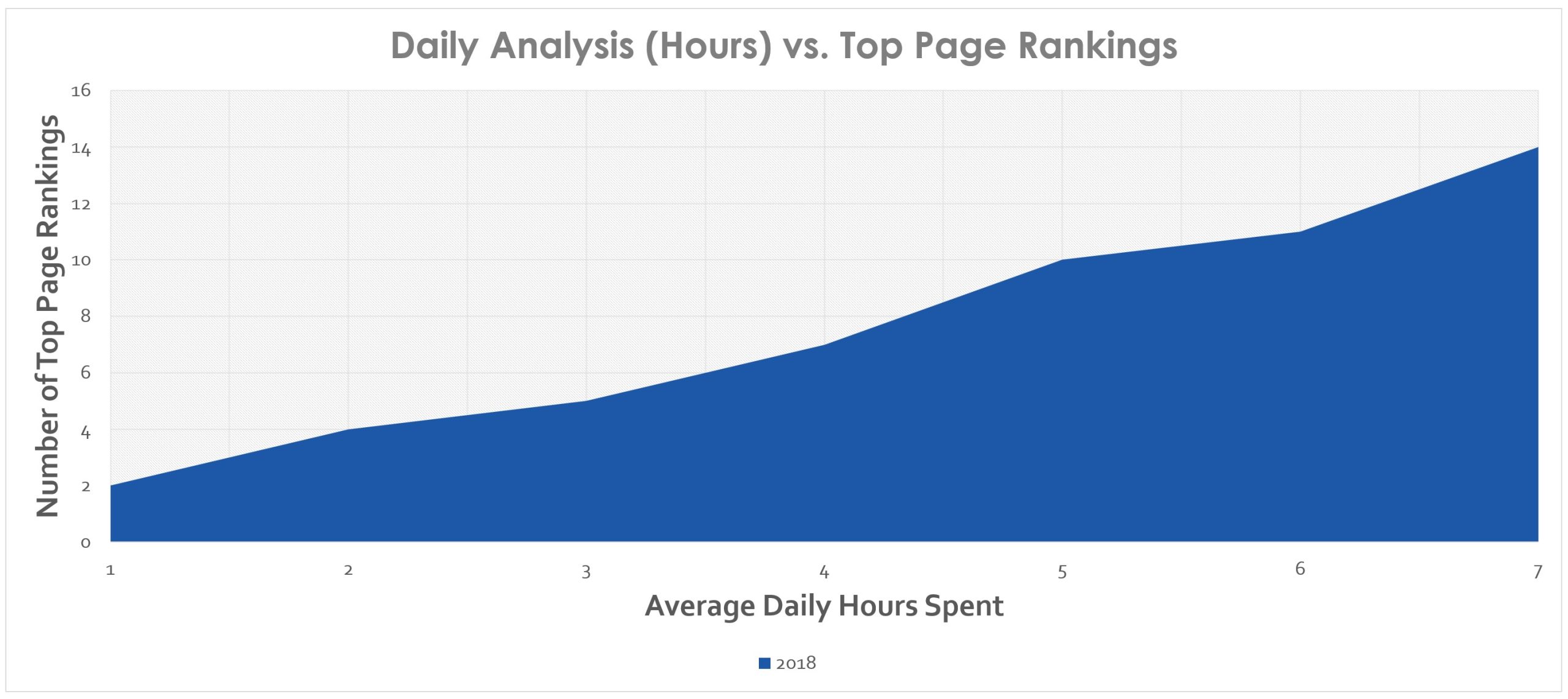

From the test results we obtained, AI completely transformed our SEO analysis and solved the “analysis dilemma” for us. Up until 2018, SEO Vendor had to invest an ever-increasing amount of time in SEO research, analysis, and decision-making. At the peak, we were spending nearly 7 hours on strategy alone.

That figure does not include the time and effort spent on content creation or backlink building; it was purely SEO analysis time. After AI analysis was introduced, we lowered our SEO analysis time to pre-2010 levels.

Predictive SEO Analysis

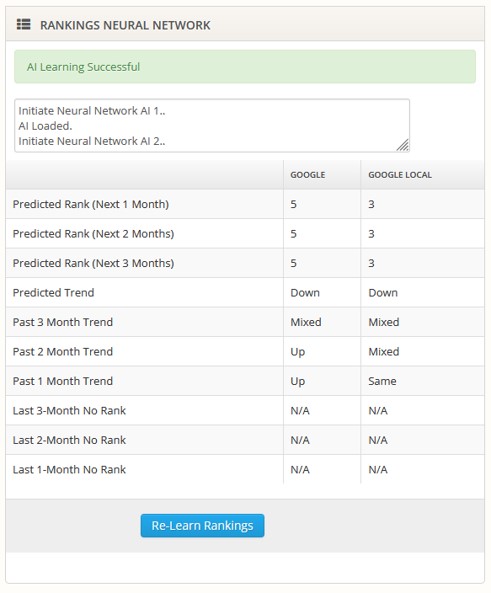

The most basic type of predictive SEO analysis on the market is what we consider trend analysis. It uses historical data, such as rankings, to predict a future outcome. The math is based on regression analysis: fitting a linear or parabolic equation to past data points and extrapolating it to estimate a future value.

This is useful when we are looking at a particular trend, for example, whether rankings are trending up or down. We can use predictive SEO as a signal for 1-month, 3-month, or 6-month ranking trends, which serves as a guide to the general direction our strategies are headed. However, trends are less effective when we’re trying to make a branch decision about which SEO factors need the most attention.
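A minimal sketch of the linear case, assuming one average position per month for a single keyword (the numbers are hypothetical). Because a lower position number is better, a negative slope means rankings are improving. The forecast is clamped at position 1, since a straight line will happily extrapolate past the top of the results page.

```python
from __future__ import annotations


def fit_line(ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * t + intercept, with t = 0, 1, 2, ..."""
    n = len(ys)
    t_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    cov = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(ys))
    var = sum((t - t_mean) ** 2 for t in range(n))
    slope = cov / var
    return slope, y_mean - slope * t_mean


def forecast(ys: list[float], months_ahead: int) -> float:
    """Extrapolate the fitted line; a position cannot be better than 1."""
    slope, intercept = fit_line(ys)
    t_future = len(ys) - 1 + months_ahead
    return max(1.0, slope * t_future + intercept)


# Hypothetical monthly average positions for one keyword (lower is better)
history = [40, 36, 33, 29, 26, 22]
slope, _ = fit_line(history)
print(f"slope per month: {slope:.2f}")  # -3.54: rankings improving
for months in (1, 3, 6):
    print(f"{months}-month forecast: {forecast(history, months):.1f}")
```

The 6-month forecast hits the clamp at 1.0, which shows why a pure trend line says little about *which* factors to work on: it only extrapolates direction.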

SEO factors, by contrast, come from observing top-ranking pages and extracting the qualities that appear to matter most. While that may seem subjective, we can treat these factors as reflecting how Google has decided to rank a website.

Instead of making assumptions about which ranking factors matter, the design lets artificial intelligence decide, based on the patterns its neural networks have learned, which ranking strategy to pursue going forward.

While we won’t go into the depths of how neural networks and machine learning work, suffice it to say that using artificial intelligence to predict rankings from historical rankings alone yields information of limited value.

Essentially, even after many training iterations, even thousands, only so much information can be gleaned from the single dimension of rankings.

That’s not to say it’s useless. Neural networks help us understand predictive trends that are more useful over the long run. The more historical data we collect, the better the network becomes at gauging ranking behavior and the direction of a strategy.

In essence, at its best the system can give us an understanding of future rankings, and at its worst it can show us whether we risk heading in a negative direction. That information alone can be critical to knowing which way the ship is headed: if rankings are trending downward, it at least provides an early warning that we should rethink our SEO strategy.
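An early-warning check can be as simple as the sign of the recent slope. In this sketch, `window` and `tolerance` are hypothetical tuning knobs, not values from the article: it flags a keyword when the least-squares slope over its last few positions is losing more than half a position per step.

```python
from __future__ import annotations


def trend_slope(positions: list[float]) -> float:
    """Least-squares slope of positions over equally spaced time steps."""
    n = len(positions)
    t_mean = (n - 1) / 2
    y_mean = sum(positions) / n
    num = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(positions))
    den = sum((t - t_mean) ** 2 for t in range(n))
    return num / den


def early_warning(positions: list[float], window: int = 4, tolerance: float = 0.5) -> bool:
    """True if the recent trend loses more than `tolerance` positions per step.

    Positions are rank numbers, so a rising slope means rankings are getting worse.
    """
    recent = positions[-window:]
    if len(recent) < 2:
        return False  # not enough points to estimate a trend
    return trend_slope(recent) > tolerance


print(early_warning([12, 11, 10, 11, 13, 15]))  # True: slope 1.7 over the last 4
print(early_warning([25, 22, 20, 18, 17, 15]))  # False: still improving
```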

Branch Prediction AI and True SEO Learning

Using neural networks to predict future rankings from rankings data alone is not the most useful application of artificial intelligence. A better way to achieve higher rankings with machine learning is to have it study the factors that contribute to higher rankings rather than the rankings themselves. For example, consider the factors listed here.

- PA – Page Authority

- DA – Domain Authority

- Links – Number of backlinks

- MozRank – Moz Rank

- URLTotalBASECount – Total base keywords in the URL

- TitleCount – Number of characters in the title

- TitleWordCount – Number of words in the title

- TitleEMTCount – Number of keywords in the title

- TitleTotalBASECount – Number of base keywords in the title

- MetaCount – Number of characters in the meta description

- MetaWordCount – Number of words in the meta description

- MetaEMTCount – Number of keywords in the meta description

- MetaTotalBASECount – Number of base keywords in the meta description

- H1Count – Number of characters in H1s

- H1WordCount – Number of words in H1s

- H1EMTCount – Number of keywords in H1s

- H1TotalBASECount – Number of base keywords in H1s

- H2Count – Number of characters in H2s

- H2WordCount – Number of words in H2s

- H2EMTCount – Number of keywords in H2s

- H2TotalBASECount – Number of base keywords in H2s

- H3Count – Number of characters in H3s

- H3WordCount – Number of words in H3s

- H3EMTCount – Number of keywords in H3s

- H3TotalBASECount – Number of base keywords in H3s

- BodyCount – Number of characters in the body text

- BodyWordCount – Number of words in the body text

- BodyEMTCount – Number of keywords in the body text

- BodyTotalBASECount – Number of base keywords in the body text
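Most of the on-page factors above follow one pattern per page element: a character count, a word count, a keyword count, and a base-keyword count. The sketch below computes those four for any element. The list doesn’t define “EMT” or “base keyword” precisely, so this code *assumes* EMT means exact matches of the full keyword phrase and BASE means occurrences of any individual word from the keyword; the tokenizer is also a simplification.

```python
from __future__ import annotations

import re


def tokenize(text: str) -> list[str]:
    # Lowercase word tokens; punctuation such as "|" is dropped
    return re.findall(r"[a-z0-9']+", text.lower())


def element_features(prefix: str, text: str, keyword: str) -> dict[str, int]:
    """Count, WordCount, EMTCount and TotalBASECount for one page element."""
    words = tokenize(text)
    kw = tokenize(keyword)
    n = len(kw)
    # Assumption: EMT = exact matches of the full keyword phrase
    if n:
        emt = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw)
    else:
        emt = 0
    # Assumption: BASE = occurrences of any individual word from the keyword
    kw_set = set(kw)
    base = sum(1 for w in words if w in kw_set)
    return {
        f"{prefix}Count": len(text),
        f"{prefix}WordCount": len(words),
        f"{prefix}EMTCount": emt,
        f"{prefix}TotalBASECount": base,
    }


def page_features(elements: dict[str, str], keyword: str) -> dict[str, int]:
    """Merge the counts for every element (Title, Meta, H1, ...) into one feature row."""
    row: dict[str, int] = {}
    for prefix, text in elements.items():
        row.update(element_features(prefix, text, keyword))
    return row


page = {
    "Title": "Cheap Running Shoes | Best Running Shoes for Men",
    "H1": "Running Shoes for Every Runner",
}
print(page_features(page, "running shoes"))
```

Running the same function over each of the top 10 results for a keyword yields one feature row per site, which is the shape of data the next paragraphs describe feeding to the AI.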

This list is not exhaustive; an AI system lets us enter as many variables as we want. For any given keyword, we can examine the top 10 sites and their values for these factors.

We choose the top 10 sites because they have the attributes that Google has decided to rank highest.

A set of neural networks is then trained on each of the listed factors for each of the 10 sites. Training a neural network requires iterations, and the more iterations we run, the better the AI becomes at converging on a reliable output.
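To show what “more iterations” buys, here is a deliberately simplified stand-in: instead of a set of neural networks, a single linear neuron trained by full-batch gradient descent to predict position from three normalized factor values for a keyword’s top 10 sites. The factor values are hypothetical. With a learning rate this small, gradient descent on squared error should not increase the loss from one iteration to the next, so the history falls steadily toward its minimum.

```python
from __future__ import annotations

# Hypothetical normalized factors (e.g. DA, body length, title keyword match)
# for the sites in positions 1..10 of one keyword
rows = [
    [0.90, 0.80, 1.0], [0.85, 0.70, 1.0], [0.80, 0.75, 0.5], [0.70, 0.60, 1.0],
    [0.65, 0.50, 0.5], [0.60, 0.55, 0.0], [0.50, 0.40, 0.5], [0.45, 0.30, 0.0],
    [0.40, 0.35, 0.0], [0.30, 0.20, 0.0],
]
positions = [float(p) for p in range(1, 11)]


def train(rows, targets, iterations, lr=0.05):
    """Full-batch gradient descent on mean squared error for one linear neuron."""
    n, k = len(rows), len(rows[0])
    w, b = [0.0] * k, 0.0
    history = []
    for _ in range(iterations):
        preds = [sum(wj * xj for wj, xj in zip(w, x)) + b for x in rows]
        errors = [p - y for p, y in zip(preds, targets)]
        history.append(sum(e * e for e in errors) / n)  # loss before this update
        for j in range(k):
            w[j] -= lr * 2 * sum(e * x[j] for e, x in zip(errors, rows)) / n
        b -= lr * 2 * sum(errors) / n
    return w, b, history


w, b, history = train(rows, positions, iterations=2000)
for it in (1, 10, 100, 2000):
    print(f"iteration {it:>4}: loss {history[it - 1]:.3f}")
```

More iterations help only up to a point: the loss flattens once the model has extracted what it can from the inputs, which is one reason the article stresses tuning and trial and error.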

Tweaking and tuning the AI until it reaches a reliable conclusion requires a lot of trial and error. We have to keep in mind that neural networks are general-purpose: there’s nothing inherently SEO-specific about them.

An AI unit cannot tell us exactly which SEO factors matter most to Google. Instead, we can think of the inside of the neural network as a black box.

What comes out of the AI analysis is an observation of how the SEO factors influence the position of a site’s ranking.

To understand which factors had an influence, we have to compare the AI output with the input factors, and then relate them based on further gap analysis.
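One common way to relate a black-box model’s output back to its inputs is perturbation (sensitivity) analysis: nudge one input factor at a time and see how the predicted position moves. We don’t know whether this is how SEO Vendor’s gap analysis works; the sketch below uses a hypothetical `stand_in_model` in place of a trained network.

```python
from __future__ import annotations

from typing import Callable


def sensitivity(model: Callable[[dict[str, float]], float],
                features: dict[str, float], delta: float = 0.1) -> dict[str, float]:
    """Change in predicted position when each factor alone is raised by `delta`."""
    baseline = model(features)
    effects = {}
    for name in features:
        nudged = dict(features)
        nudged[name] += delta
        effects[name] = model(nudged) - baseline
    return effects


def stand_in_model(f: dict[str, float]) -> float:
    # Hypothetical stand-in for a trained network: predicted position, lower is better
    return 20 - 8 * f["DA"] - 3 * f["BodyEMTCount"] + 0.5 * f["TitleCount"]


site = {"DA": 0.4, "BodyEMTCount": 0.2, "TitleCount": 0.6}
effects = sensitivity(stand_in_model, site)
# Most negative change first: the factor whose increase helps rankings the most
for name in sorted(effects, key=effects.get):
    print(f"{name:>14}: {effects[name]:+.2f} positions")
```

Because the sensitivity function only calls the model, it works the same way whether the model is this toy formula or an opaque trained network.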

AI Keyword Memory Banks

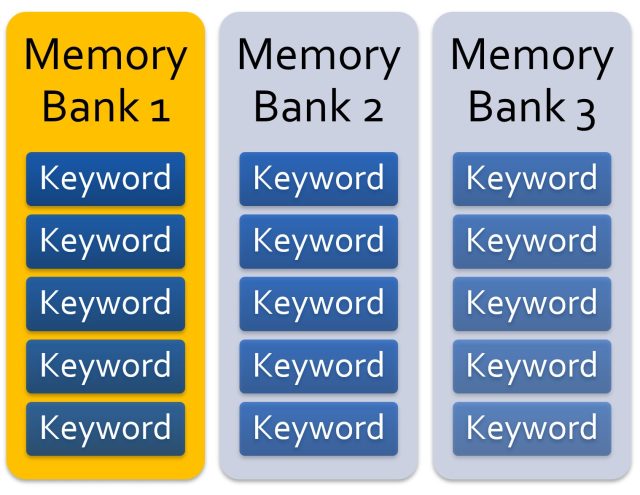

Once an AI unit has been trained, we can take a snapshot of its neural network and save it to a memory bank. Each memory bank stores a set of neural networks trained for one specific keyword. To train the AI on all the keywords in a project, we train an AI unit on each keyword and store the results in memory banks one by one.
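The storage side of that idea can be sketched as a dictionary keyed by keyword, serialized to JSON. The `MemoryBank` class is hypothetical, and each “snapshot” here is just a weight list and bias standing in for a trained network’s parameters.

```python
from __future__ import annotations

import json


class MemoryBank:
    """Hypothetical store: one trained model snapshot per keyword."""

    def __init__(self) -> None:
        self._snapshots: dict[str, dict] = {}

    @staticmethod
    def _key(keyword: str) -> str:
        # Normalize so "Running Shoes" and "running shoes" share one bank
        return " ".join(keyword.lower().split())

    def save(self, keyword: str, weights: list[float], bias: float) -> None:
        self._snapshots[self._key(keyword)] = {"weights": list(weights), "bias": bias}

    def predict(self, keyword: str, features: list[float]) -> float:
        snap = self._snapshots[self._key(keyword)]  # KeyError if never trained
        return sum(w * x for w, x in zip(snap["weights"], features)) + snap["bias"]

    def to_json(self) -> str:
        return json.dumps(self._snapshots, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "MemoryBank":
        bank = cls()
        bank._snapshots = json.loads(text)
        return bank


bank = MemoryBank()
bank.save("running shoes", weights=[-4.0, -2.0], bias=12.0)
bank.save("trail shoes", weights=[-1.0, -6.0], bias=10.0)
restored = MemoryBank.from_json(bank.to_json())
print(restored.predict("Running Shoes", [1.0, 0.5]))  # 7.0
print(restored.predict("trail shoes", [0.5, 0.5]))    # 6.5
```

Keeping one small model per keyword means retraining or discarding a keyword never touches the others, which matches the “micro-mind per keyword” framing below.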

We observed that this was the only consistent way to train the AI to produce reliable results. The problem we had to solve was that any AI solution we built had to keep up with the vast complexities in Google’s algorithm.

Imagine if we had to develop an AI that could determine the ranking outcome for any keyword. While storing all the training in one AI unit is technically feasible, it would require an AI system capable of sampling ranking factors from billions of keywords multiplied by billions of sites.

Storing individual AI learning sets to keyword memory banks is a way to learn efficiently. We get to focus on the top sites that matter to a keyword without compromising on reliability.

This way, every cluster of neural networks is purposed for only one keyword. Think of it as an artificial micro-mind designed for a tiny SEO campaign.

SEO Results from Predictive AI Analysis

One of our biggest concerns in our experiments was how the results of our AI analysis would turn out. We have to bear in mind that the AI unit doesn’t rank our projects; a person still carries out the plan. This let us keep a careful watch on what the AI expected us to do while maintaining a sanity check on its recommendations.

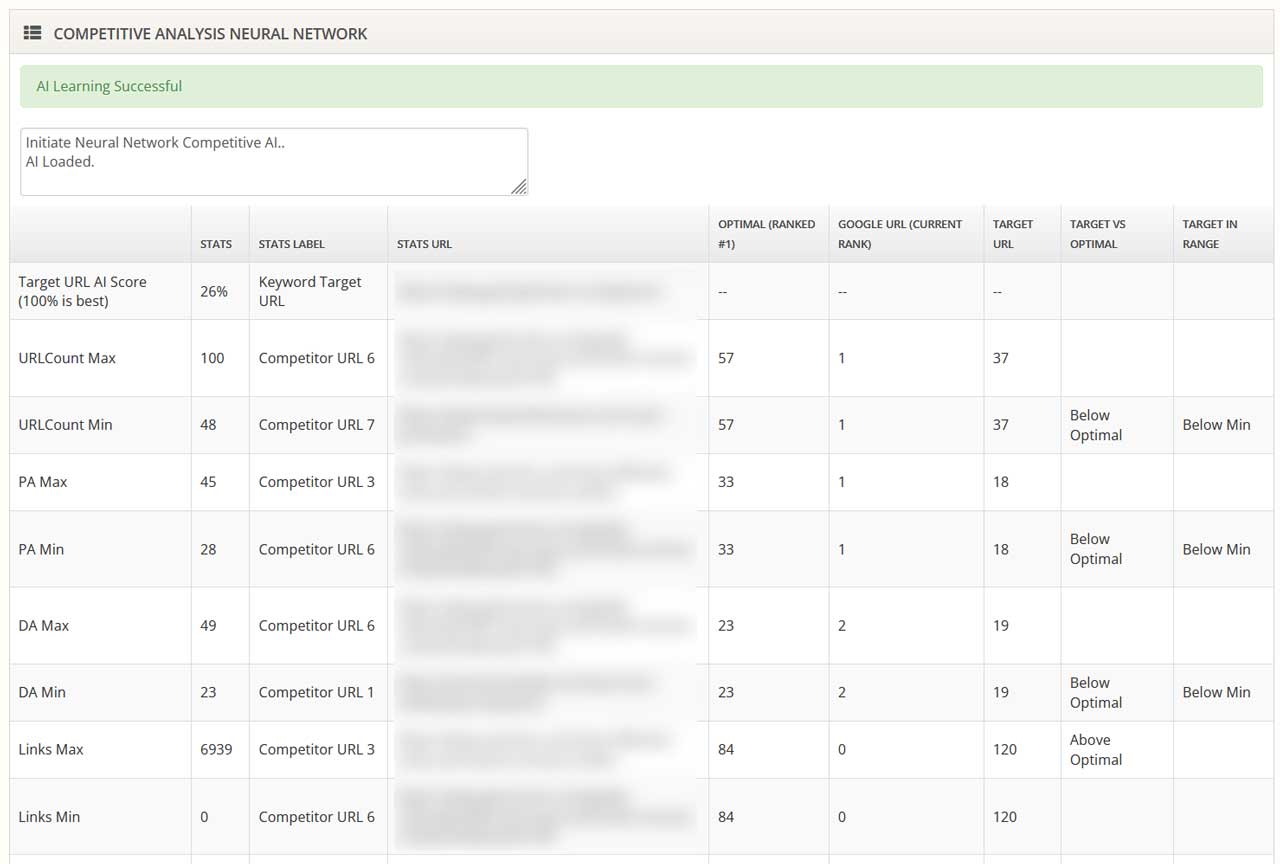

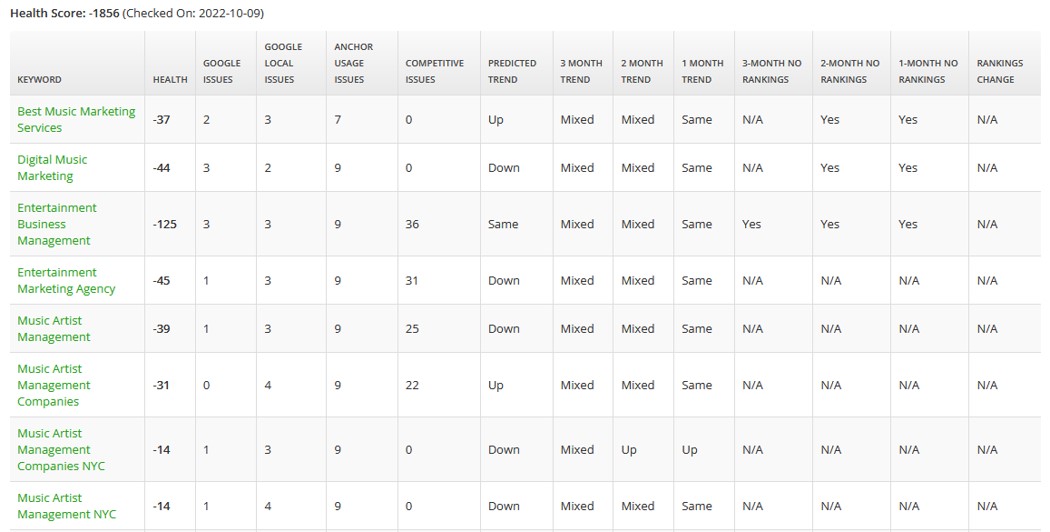

An AI algorithm doesn’t show us exactly which ranking factors matter most, but it does tell us the gap between our target site and the sites Google ranks at the top. From that gap, we can infer which attributes are deficient on our site.

An automated competitive analysis then combines the neural network’s conclusions with data from the top 10 ranked competitors, comparing the essential factors to determine the gap to an optimal SEO strategy.
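The comparison step can be sketched as a relative-gap report against the competitor average. The 20% threshold, the factor values, and the use of three competitors (instead of ten, for brevity) are all assumptions for illustration.

```python
from __future__ import annotations

from statistics import mean


def gap_report(target: dict[str, float], competitors: list[dict[str, float]],
               min_gap: float = 0.2) -> list[tuple[str, float, float]]:
    """Factors where the target trails the competitor average by more than `min_gap`.

    Returns (factor, target value, competitor average), largest relative gap first.
    """
    gaps = []
    for factor, value in target.items():
        benchmark = mean(c[factor] for c in competitors)
        if benchmark > 0 and (benchmark - value) / benchmark > min_gap:
            gaps.append((factor, value, benchmark))
    return sorted(gaps, key=lambda g: (g[2] - g[1]) / g[2], reverse=True)


# Hypothetical factor values; in practice this would be the top 10 results
competitors = [
    {"BodyWordCount": 1800, "H2EMTCount": 3, "DA": 45},
    {"BodyWordCount": 2200, "H2EMTCount": 2, "DA": 50},
    {"BodyWordCount": 2000, "H2EMTCount": 4, "DA": 40},
]
target = {"BodyWordCount": 900, "H2EMTCount": 3, "DA": 30}
for factor, value, benchmark in gap_report(target, competitors):
    print(f"{factor}: {value} vs competitor average {benchmark}")
```

Ranking by *relative* gap keeps factors on very different scales (word counts versus authority scores) comparable.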

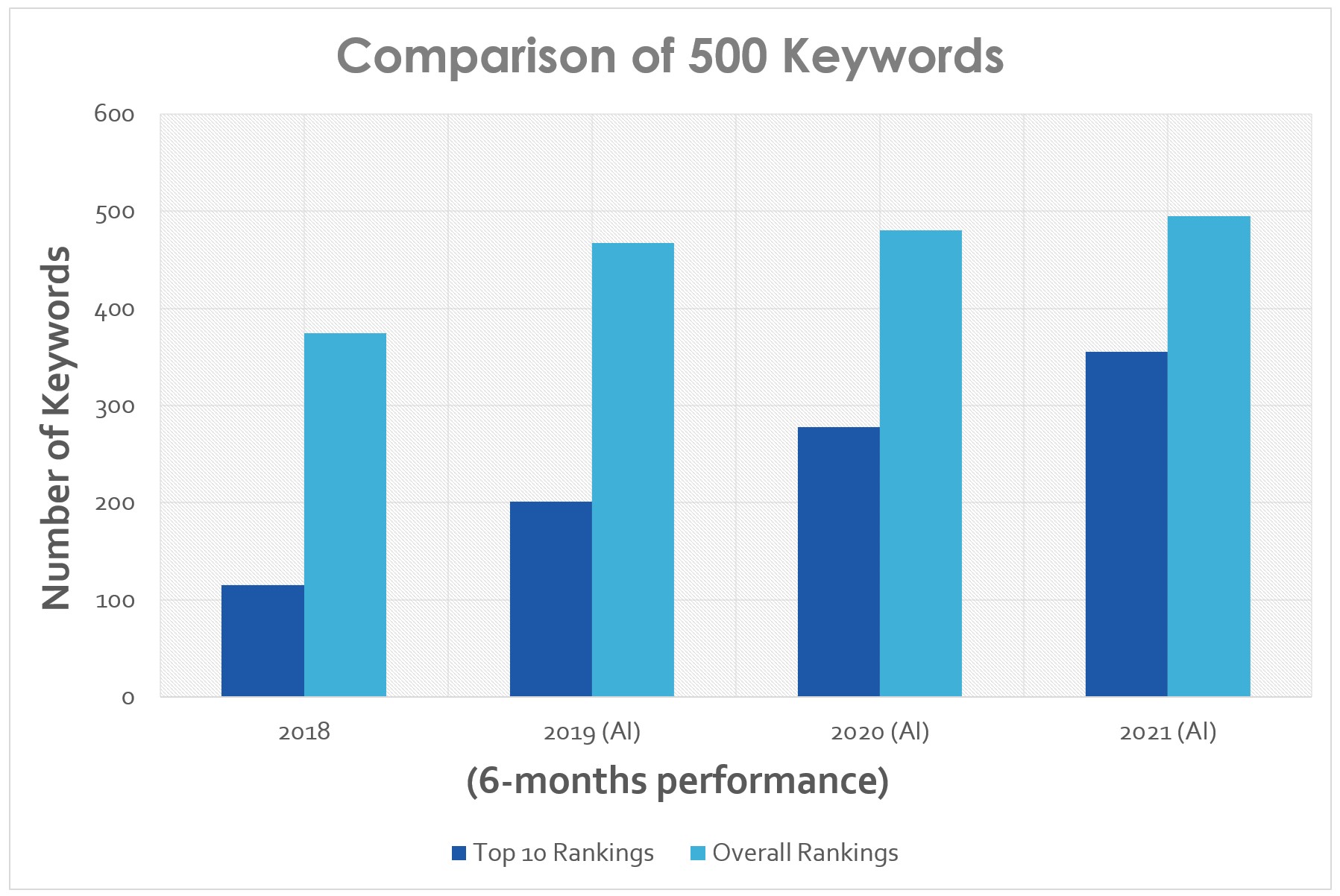

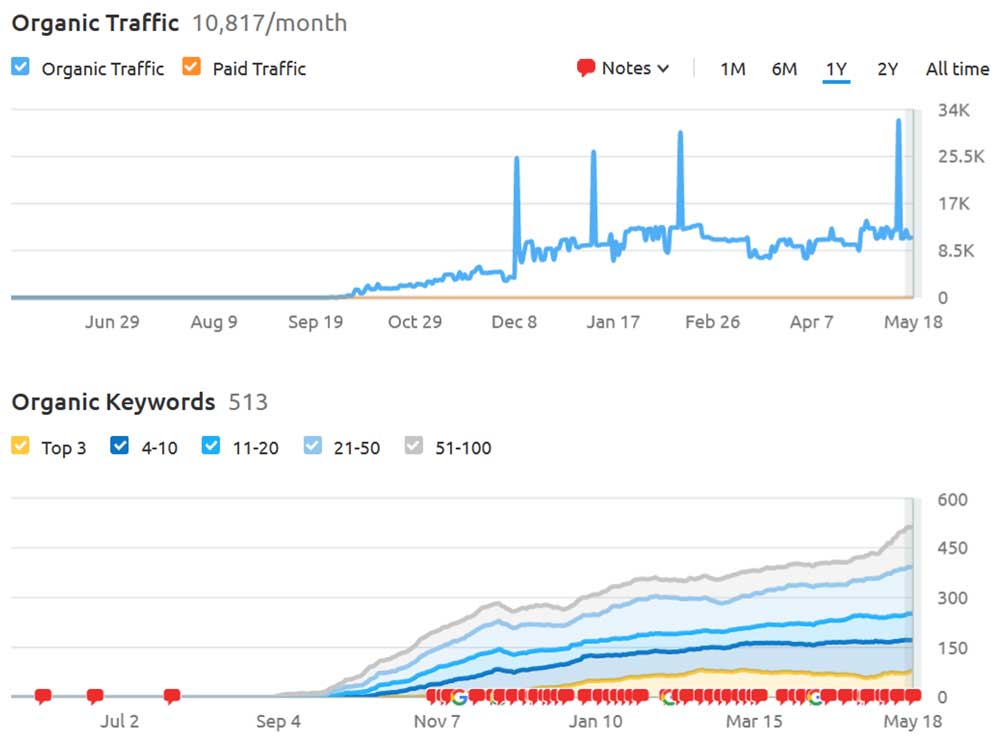

In our test, we collected 3 years of AI analysis data (2019 to 2021) and compared it with 2018 (pre-AI) for 500 randomly chosen keywords from our projects. The results were staggering.

Prior to 2019, our ranking rate was 75%. Once AI analysis was introduced, the ranking rate improved significantly. By 2021, we were achieving a 99% ranking rate, meaning that nearly all target keywords reached a ranking within the top 100 in 6 months or less.

Rankings improve even further between 6 months and a year.

Top 10 rankings also improved significantly. In 2018, we had a 23% top-ranking rate in the first 6 months. By 2021, the top-ranking rate had risen to 72%, more than 3 times the 2018 rate.
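For readers who want to compute the same kind of metric on their own keywords, here is a sketch of the two rates as defined above, using a hypothetical 10-keyword sample rather than the study’s data. The last line just checks the arithmetic on the reported 23% and 72% figures.

```python
from __future__ import annotations


def ranking_rates(best_positions: list[int | None]) -> tuple[float, float]:
    """Share of keywords reaching the top 100 and the top 10.

    Each entry is a keyword's best position within the first 6 months,
    or None if it never appeared in the tracked results.
    """
    n = len(best_positions)
    ranked = sum(1 for p in best_positions if p is not None and p <= 100)
    top10 = sum(1 for p in best_positions if p is not None and p <= 10)
    return ranked / n, top10 / n


# Hypothetical sample of 10 keywords
sample = [3, 15, None, 8, 42, 1, 99, None, 7, 120]
ranked_rate, top10_rate = ranking_rates(sample)
print(f"ranking rate {ranked_rate:.0%}, top 10 rate {top10_rate:.0%}")  # 70%, 40%

# The reported top 10 rates: 23% in 2018 vs 72% in 2021
print(f"improvement factor: {0.72 / 0.23:.2f}x")  # 3.13x
```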

Additional Observations from SEO AI Analysis

Once a month, we retrain the AI on every keyword in a project so that it can relearn the top 10 ranked sites. By doing so, the AI can keep up with Google algorithm updates, changes in competitive sites, and any other ranking fluctuations.

We can also introduce new attributes at any time to allow the AI system to improve the accuracy of the output.

For example, if we were to put in 300 factors, we would likely learn more about which ranking attributes matter most.

In addition, if we expanded the experiment to include the top 100 rankings, we would be able to capture a much wider range of ranking changes. This matters because machine learning is more reliable when it has more data.

The larger the data set, the more accurately machine learning can model ranking behavior.

In one case, an ecommerce site we developed started on a new domain with no backlinks and no online history of any kind. Within 6 months, the site’s organic traffic grew to over 10,000 visitors per month, and the client reported revenue growing from $0 to over $30,000.

AI can help quickly analyze a sudden drop in rankings and identify the key attributes needed to raise rankings again. In our “Eye in the Sky” system, the AI gives our team a score based on the number of issues it has identified as systematic gaps.
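The article doesn’t say how “Eye in the Sky” weights its issues, so the following is only a hypothetical illustration of an issue-count score: start at 100, subtract a default penalty per identified gap, allow heavier penalties for chosen issue types, and floor the result at 0.

```python
from __future__ import annotations


def gap_score(issues: list[str], weights: dict[str, int] | None = None,
              default_weight: int = 5, max_score: int = 100) -> int:
    """Hypothetical score: start at max_score and subtract a penalty per identified gap."""
    weights = weights or {}
    penalty = sum(weights.get(issue, default_weight) for issue in issues)
    return max(0, max_score - penalty)


issues = ["thin body copy", "keyword missing from H1", "low domain authority"]
print(gap_score(issues, weights={"low domain authority": 15}))  # 75
```

A score like this is mainly useful for triage: comparing keywords or projects and deciding where to spend analysis time first.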

SEO Vendor is continuing to advance research into predictive SEO and AI analysis. Our SEO AI analysis and predictive learning methods are part of the patent-pending technologies available to all of our agency members. If you have a question about our AI technology or how your agency can utilize AI for your clients, feel free to contact us at any time.
